Brief Synopsis

SDK 2.2 is not a major upgrade, but from a developer’s point of view it brings useful features, most notably remote debugging in the cloud (a big ask until now) and the Windows Azure Management Libraries. Service Bus can now partition queues and topics across multiple message brokers, which improves availability: previously each queue or topic was assigned to a single message broker, making that broker a single point of failure. Read the Q&A at the end for the nuances of these changes and for a general approach to moving a project to Azure SDK 2.2.

At a high level, the new features are:

- Visual Studio 2013 Support

- Integrated Windows Azure Sign-In support within Visual Studio

- Remote Debugging Cloud Services with Visual Studio – Very Relevant to Developers

- Firewall Management support within Visual Studio for SQL Databases

- Visual Studio 2013 RTM VM Images for MSDN Subscribers

- Windows Azure Management Libraries for .NET – Very Relevant to Deployment Team

- Updated Windows Azure PowerShell Cmdlets and ScriptCenter

- Topology Blast – Relevant to Deployment Team

- Windows Azure Service Bus – partition queues and topics across multiple message brokers – Relevant to Developers. All Service Bus-based projects should move to SDK 2.2 as soon as possible.

Only the highlighted areas are covered below.

Remote Debugging Cloud Resources within Visual Studio

Today’s Windows Azure SDK 2.2 release adds support for remote debugging many types of Windows Azure resources. With live, remote debugging support from within Visual Studio, you now have more visibility than ever before into how your code is operating live in Windows Azure. Let’s walk through how to enable remote debugging for a Cloud Service.

Remote Debugging of Cloud Services

Note: To be debugged remotely, the web or worker role must be built with Azure SDK 2.2.

To enable remote debugging for your cloud service, select Debug as the Build Configuration on the Common Settings tab of your Cloud Service’s publish dialog wizard.

Then click the Advanced Settings tab and check the Enable Remote Debugging for all roles checkbox.

Once your cloud service is published and running live in the cloud, simply set a breakpoint in your local source code:

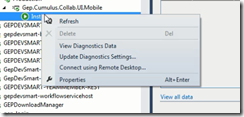

Then use Visual Studio’s Server Explorer to select the Cloud Service instance deployed in the cloud, and use the Attach Debugger context menu on the role, or on a specific VM instance of it.

Once the debugger attaches to the Cloud Service, and a breakpoint is hit, you’ll be able to use the rich debugging capabilities of Visual Studio to debug the cloud instance remotely, in real-time, and see exactly how your app is running in the cloud.

Today’s remote debugging support is super powerful, and makes it much easier to develop and test applications for the cloud. Support for remote debugging Cloud Services is available as of today, and we’ll also enable support for remote debugging Web Sites shortly.

Windows Azure Management Libraries for .NET (Preview) – Automating PowerShell

Windows Azure Management Libraries are in Preview!

What do the Azure Management Libraries provide? Control over the creation, deployment, and tear-down of resources, which previously was available only at the PowerShell level, is now available in code.

Having the ability to automate the creation, deployment, and tear down of resources is a key requirement for applications running in the cloud. It also helps immensely when running dev/test scenarios and coded UI tests against pre-production environments.

These new libraries make it easy to automate tasks using any .NET language (e.g. C#, VB, F#, etc). Previously this automation capability was only available through the Windows Azure PowerShell Cmdlets or to developers who were willing to write their own wrappers for the Windows Azure Service Management REST API.
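For context, here is the kind of raw request such a hand-written wrapper has to build. This is a minimal Python sketch, assuming the Service Management REST endpoint `https://management.core.windows.net/<subscription-id>/locations` and the `x-ms-version` header; the version string and subscription ID are placeholders, and actually sending the request also requires authenticating with a management certificate, which is omitted here.

```python
import urllib.request

# Base URI of the classic Windows Azure Service Management REST API.
MANAGEMENT_ENDPOINT = "https://management.core.windows.net"
# Assumption: an example API version string; check the REST reference for
# the version required by the operation you call.
API_VERSION = "2013-08-01"


def build_list_locations_request(subscription_id: str) -> urllib.request.Request:
    """Build (but do not send) a GET request that lists data center locations."""
    url = f"{MANAGEMENT_ENDPOINT}/{subscription_id}/locations"
    # Service Management calls must carry the x-ms-version header.
    return urllib.request.Request(url, method="GET", headers={"x-ms-version": API_VERSION})


if __name__ == "__main__":
    # Placeholder subscription ID; sending this request would additionally
    # need the management certificate configured on the HTTPS connection.
    req = build_list_locations_request("00000000-0000-0000-0000-000000000000")
    print(req.get_method(), req.full_url)
```

The management libraries hide exactly this plumbing (URIs, headers, authentication, response parsing) behind typed client classes.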

Modern .NET Developer Experience

We’ve worked to design easy-to-understand .NET APIs that still map well to the underlying REST endpoints, making sure to use and expose the modern .NET functionality that developers expect today:

- Portable Class Library (PCL) support targeting applications built for any .NET Platform (no platform restriction)

- Shipped as a set of focused NuGet packages with minimal dependencies to simplify versioning

- Support for async/await task-based asynchrony (with easy sync overloads)

- Shared infrastructure for common error handling, tracing, configuration, HTTP pipeline manipulation, etc.

- Factored for easy testability and mocking

- Built on top of popular libraries like HttpClient and Json.NET
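Two of the design points above, async-first methods with sync overloads and factoring for mocking, can be illustrated with a small Python analogue. This is a hypothetical sketch of the pattern, not the .NET library’s API: `LocationsClient` and its injected `transport` are invented names, and asyncio stands in for .NET tasks.

```python
import asyncio
from typing import Awaitable, Callable, List

# A transport is any coroutine function returning location names.
Transport = Callable[[], Awaitable[List[str]]]


class LocationsClient:
    """Hypothetical client: async-first method plus a thin sync overload."""

    def __init__(self, transport: Transport) -> None:
        # Injecting the transport keeps the client easy to test and mock.
        self._transport = transport

    async def list_locations_async(self) -> List[str]:
        return await self._transport()

    def list_locations(self) -> List[str]:
        # Sync overload: drive the async method to completion on a fresh
        # event loop (must not be called from inside a running loop).
        return asyncio.run(self.list_locations_async())


async def fake_transport() -> List[str]:
    # Stand-in for an HTTP call; returns canned data for tests.
    return ["West US", "East US"]


if __name__ == "__main__":
    client = LocationsClient(fake_transport)
    print(client.list_locations())
```

Because the network call sits behind the injected transport, a test can swap in canned data without touching the client logic.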

Below is a list of a few of the management client classes that are shipping with today’s initial preview release:

| .NET Class Name | Supports Operations for these Assets (and potentially more) |
|---|---|
| ManagementClient | Locations |
| ComputeManagementClient | Hosted Services, Deployments, Virtual Machines, Virtual Machine Images & Disks |
| StorageManagementClient | Storage Accounts |
| WebSiteManagementClient | Web Sites, Web Site Publish Profiles, Usage Metrics, Repositories |
| VirtualNetworkManagementClient | Networks, Gateways |

Automating Creating a Virtual Machine using .NET

Let’s walk through an example of how we can use the new Windows Azure Management Libraries for .NET to fully automate creating a Virtual Machine. I’m deliberately showing a scenario with a lot of custom options configured (including VHD image gallery enumeration, attaching data drives, and network endpoint and firewall rule setup) to show off the full power and richness of what the new library provides.

We’ll begin with some code that demonstrates how to enumerate through the built-in Windows images within the standard Windows Azure VM Gallery. We’ll search for the first VM image that has the word “Windows” in it and use that as our base image to build the VM from. We’ll then create a cloud service container in the West US region to host it.
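The selection logic in this step is simple enough to sketch independently of the library. The Python below is a minimal illustration under stated assumptions: `GalleryImage` and `CloudServiceSpec` are hypothetical stand-ins for the library’s types, and the gallery entries and service name are sample data rather than a real gallery listing.

```python
from dataclasses import dataclass
from typing import Iterable, Optional


@dataclass
class GalleryImage:
    name: str
    label: str


@dataclass
class CloudServiceSpec:
    service_name: str
    location: str


def pick_base_image(images: Iterable[GalleryImage], keyword: str = "Windows") -> Optional[GalleryImage]:
    """Return the first image whose label contains keyword, or None."""
    return next((img for img in images if keyword in img.label), None)


# Hypothetical sample of gallery entries (a real gallery is fetched from the service).
gallery = [
    GalleryImage("img-ubuntu", "Ubuntu Server 12.04 LTS"),
    GalleryImage("img-win2012", "Windows Server 2012 Datacenter"),
    GalleryImage("img-win2008", "Windows Server 2008 R2 SP1"),
]

base_image = pick_base_image(gallery)
service = CloudServiceSpec(service_name="demo-vm-svc", location="West US")
print(base_image.name, service.location)
```

Returning `None` when nothing matches lets the caller fail early, before any cloud service is created.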

We can then customize some options on it, such as the computer name, admin username/password, and hostname. We’ll also open up a remote desktop (RDP) endpoint through its security firewall.

We’ll then specify the host (OS) VHD and the data drives that we want to mount on the Virtual Machine, and specify the size of the VM we want to run it in.
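The configuration from the last two steps (machine name, credentials, hostname, RDP endpoint, OS and data disks, VM size) can be pictured as a single specification object. This is a hypothetical Python sketch, not the library’s object model: the class and field names, the storage URI, and the credentials are illustrative assumptions; only port 3389 (the standard RDP port) and the role size name `Medium` are taken as known values.

```python
from dataclasses import dataclass, field
from typing import List

RDP_PORT = 3389  # standard Remote Desktop port


@dataclass
class InputEndpoint:
    name: str
    protocol: str
    local_port: int
    public_port: int


@dataclass
class DataDisk:
    label: str
    size_gb: int
    lun: int  # logical unit number the disk is attached at


@dataclass
class VirtualMachineSpec:
    computer_name: str
    admin_username: str
    admin_password: str
    host_name: str
    os_vhd_uri: str
    role_size: str = "Small"
    endpoints: List[InputEndpoint] = field(default_factory=list)
    data_disks: List[DataDisk] = field(default_factory=list)

    def open_rdp(self, public_port: int = RDP_PORT) -> None:
        # Expose RDP through the firewall by adding an input endpoint.
        self.endpoints.append(InputEndpoint("RemoteDesktop", "tcp", RDP_PORT, public_port))

    def attach_data_disk(self, label: str, size_gb: int) -> None:
        # Assign LUNs sequentially, starting at 0.
        self.data_disks.append(DataDisk(label, size_gb, lun=len(self.data_disks)))


spec = VirtualMachineSpec(
    computer_name="demovm",
    admin_username="azureadmin",
    admin_password="<read-from-a-secret-store>",  # never hard-code real credentials
    host_name="demovm",
    os_vhd_uri="https://demostorage.blob.core.windows.net/vhds/demovm-os.vhd",
    role_size="Medium",
)
spec.open_rdp()
spec.attach_data_disk("data1", 100)
spec.attach_data_disk("logs", 50)
```

Collecting everything into one object before calling the service keeps the eventual create call a single, reviewable step.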

Once everything has been set up, the call to create the virtual machine is executed asynchronously.
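Long-running Service Management operations are also asynchronous on the server side: the service accepts the request and the client then polls the operation’s status until it leaves the in-progress state. The Python sketch below shows that polling loop with asyncio; `get_status` is a hypothetical stand-in for a status call, and the `InProgress`/`Succeeded` strings are assumed to mirror the status values the Service Management API reports.

```python
import asyncio
from typing import Awaitable, Callable, Iterable

StatusFn = Callable[[], Awaitable[str]]


async def wait_for_operation(get_status: StatusFn, poll_interval: float = 5.0, max_polls: int = 120) -> str:
    """Poll until the operation is no longer 'InProgress' and return its final status."""
    for _ in range(max_polls):
        status = await get_status()
        if status != "InProgress":
            return status  # e.g. "Succeeded" or "Failed"
        await asyncio.sleep(poll_interval)
    raise TimeoutError("operation still in progress after max_polls polls")


def scripted_status(sequence: Iterable[str]) -> StatusFn:
    """Hypothetical fake status source that replays a fixed sequence."""
    it = iter(sequence)

    async def get_status() -> str:
        return next(it)

    return get_status


if __name__ == "__main__":
    fake = scripted_status(["InProgress", "InProgress", "Succeeded"])
    print(asyncio.run(wait_for_operation(fake, poll_interval=0.0)))
```

Bounding the loop with `max_polls` keeps a stuck deployment from blocking an automation script forever.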

In a few minutes we’ll then have a completely deployed VM running on Windows Azure with all of the settings (hard drives, VM size, machine name, username/password, network endpoints and firewall settings) fully configured and ready to use.

Topology Blast

This new functionality will allow Windows Azure to communicate topology changes to all instances of a service at one time instead of walking upgrade domains. This feature is exposed via the topologyChangeDiscovery setting in the Service Definition (.csdef) file and the Simultaneous* events and classes in the Service Runtime library.
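The difference between walking upgrade domains and notifying all instances at once can be shown with a small simulation. This Python sketch is illustrative only: the mode names `walk` and `blast` and the instance names are hypothetical, and it models just the order in which instances learn of a change, not the Service Runtime events themselves.

```python
from typing import Dict, List


def notification_batches(instances: Dict[str, int], mode: str) -> List[List[str]]:
    """Return the batches in which instances learn of a topology change.

    instances maps instance name -> upgrade domain number.
    mode "walk": one batch per upgrade domain, in domain order.
    mode "blast": every instance in a single batch.
    """
    if mode == "blast":
        return [sorted(instances)]
    if mode == "walk":
        domains = sorted(set(instances.values()))
        return [sorted(n for n, ud in instances.items() if ud == d) for d in domains]
    raise ValueError(f"unknown mode: {mode}")


roles = {"web_0": 0, "web_1": 1, "web_2": 0, "web_3": 1}
print(notification_batches(roles, "walk"))   # two batches, one per upgrade domain
print(notification_batches(roles, "blast"))  # one batch with all four instances
```

With many upgrade domains, the walk delays the last domain’s view of the topology by one full pass; the blast removes that lag.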

Windows Azure Service Bus – partition queues and topics across multiple message brokers

Service Bus employs multiple message brokers to process and store messages. Without partitioning, each queue or topic is assigned to one message broker. This mapping has the following drawbacks:

· The message throughput of a queue or topic is limited to the messaging load a single message broker can handle.

· If a message broker becomes temporarily unavailable or overloaded, all entities that are assigned to that message broker are unavailable or experience low throughput.

Partitioned queues and topics address both drawbacks by spreading each entity across multiple message brokers, so neither its throughput nor its availability depends on a single broker.
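The idea behind partitioning can be sketched as a routing function that picks one of several partitions (each backed by a broker) per message. This Python sketch is a conceptual illustration under assumptions, not the Service Bus algorithm: the SHA-256 hash, the partition counts, and the round-robin fallback for messages without a key are all choices made for the example.

```python
import hashlib
from typing import Optional, Set


def partition_for_key(key: str, partition_count: int) -> int:
    """Map a partition key to a partition deterministically."""
    digest = hashlib.sha256(key.encode("utf-8")).digest()
    return int.from_bytes(digest[:4], "big") % partition_count


def choose_partition(key: Optional[str], partition_count: int,
                     unavailable: Set[int], counter: int) -> int:
    """Pick the partition (and hence the broker) that should store a message."""
    if key is not None:
        # Keyed messages always go to the same partition.
        return partition_for_key(key, partition_count)
    # Keyless messages rotate across partitions, skipping unavailable ones,
    # so one failed broker does not block the whole entity.
    for offset in range(partition_count):
        candidate = (counter + offset) % partition_count
        if candidate not in unavailable:
            return candidate
    raise RuntimeError("no partition is currently available")


if __name__ == "__main__":
    print(choose_partition("order-42", 16, set(), 0))
    print(choose_partition(None, 4, unavailable={0}, counter=0))  # skips partition 0
```

Keyed routing preserves per-key grouping, while keyless routing trades that for the ability to route around a failed broker.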

Q&A

Q. Can I use Azure SDK 2.2 to remotely debug web roles and worker roles built with an earlier SDK?

A. No, your roles must be migrated to SDK 2.2 first. For roles on an older SDK, having SDK 2.2 installed in Visual Studio only lets you retrieve diagnostic information.

Q. What are typical issues while migrating from 1.8 to 2.2?

Worker Roles and Web Role Recycling

I have 3 worker roles and a web role in my project and I upgraded it to the new 2.2 SDK (required in VS2013). Ever since the upgrade, all of the worker roles are failing and they instantly recycle as soon as they're started.

The post can be found here: http://stackoverflow.com/questions/19717215/upgrade-to-azure-2-2-sdk-is-causing-roles-to-fail

Not able to upload the role after upgrading the SDK

The MSDN post below describes an error thrown while deploying an upgraded role to Windows Azure: you have just upgraded the SDK to 2.1 or 2.2, and deploying the role starts failing.

Link to the post: http://blogs.msdn.com/b/cie/archive/2013/10/31/not-able-to-upload-role-after-upgrading-the-sdk.aspx

Q. What are the steps to migrate to Azure SDK 2.2?

A. Open the Azure project in Visual Studio 2012, then:

- Upgrade the project via the Properties window of the Cloud Project.

- Follow the upgrade process through and fix any errors it reports.

- Run the project locally and fix any errors that appear.

- Check in the code once all fixes are in place.

- Test the upgraded project in the Dev environment to see whether anything breaks; there is a real chance of breakage due to dependencies.

Note: Web and worker roles tend to go into an inconsistent state because of library dependency mismatches. These mismatches have to be fixed.
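Dependency mismatches of this kind are easy to spot mechanically once you have, for each role, the libraries it references and their versions. The Python sketch below assumes such an inventory already exists and flags any library referenced at more than one version across roles; how the inventory is collected is left out, and the role names and version numbers are hypothetical sample data.

```python
from collections import defaultdict
from typing import Dict, List

# role name -> {library name: referenced version}
RoleRefs = Dict[str, Dict[str, str]]


def find_version_mismatches(roles: RoleRefs) -> Dict[str, Dict[str, List[str]]]:
    """Return {library: {version: [roles]}} for libraries referenced at more than one version."""
    seen: Dict[str, Dict[str, List[str]]] = defaultdict(lambda: defaultdict(list))
    for role, refs in roles.items():
        for library, version in refs.items():
            seen[library][version].append(role)
    return {
        library: {version: sorted(names) for version, names in versions.items()}
        for library, versions in seen.items()
        if len(versions) > 1
    }


# Hypothetical inventory: the worker role was not fully upgraded.
inventory = {
    "PortalWeb": {"Microsoft.WindowsAzure.ServiceRuntime": "2.2.0.0", "Newtonsoft.Json": "4.5.0.0"},
    "PortalWorker": {"Microsoft.WindowsAzure.ServiceRuntime": "1.8.0.0", "Newtonsoft.Json": "4.5.0.0"},
}
print(find_version_mismatches(inventory))
```

Running a check like this before deploying to Dev narrows down which role still references an old library version.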

Generic Migration to Azure SDK 2.2- High Level Approach

The suggested approach is to start with one component, one web role, and one worker role (WCF REST), assess the impact in terms of issues, and then decide the timelines for the others. The POC will be done in one sprint; the candidates are:

- Component – Reusable Component

- Web Role – Portal Web

- Worker Role – Portal Worker

Links

· Installation of Azure SDK 2.2 - http://www.windowsazure.com/en-us/downloads/archive-net-downloads/