When investing in the capital markets, whether in equities, options or commodities, one often looks for ways to maximize profits. Most investors start with rudimentary inputs such as tips from analysts and eventually dig below the surface to understand technical indicators. At an introductory level, technical indicators make a lot of sense. Taking these indicators and pushing the boundaries of analysis with multi-year data or real-time quant trading data, one can find many new opportunities to make money. Of course, a full-fledged equity market prediction needs multiple data sources, both historical and real-time.

Historical data, combined with some real-time market data and machine learning, can help achieve reasonable prediction accuracy.

The code is available on GitHub: https://github.com/ajayso/ML-Regression-Analysis

Generalized Linear Models

In the earlier post we covered linear regression (LR). In LR:

1. We assumed that the y data points have a normal distribution.

2. The mean of the y data points lies on the line.

However, in certain situations we may want to work with other data distributions, for example binomial, Poisson or hypergeometric.

A Brief on Data Distributions

A brief on data distribution can be found here

When working with a broader set of data, we need support for different types of distributions. The next step is to generalize the linear model. This is done by generalizing three things: the distribution of the response y, the function of the explanatory variables x, and the link between the explanatory variables and the mean of the distribution. This is the basic idea of generalized linear models.
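In symbols, these three pieces are usually written as follows. This is standard GLM notation rather than anything taken from the original code, and the symbols (the coefficients \beta, the linear predictor \eta, the link g) are introduced here only for illustration:

```latex
\begin{aligned}
y_i &\sim \text{an exponential-family distribution with mean } \mu_i
  && \text{(random component)} \\
\eta_i &= \beta_0 + \beta_1 x_{i1} + \dots + \beta_p x_{ip}
  && \text{(linear predictor)} \\
g(\mu_i) &= \eta_i
  && \text{(link function)}
\end{aligned}
```

Linear regression is the special case where the distribution is normal and g is the identity, so that \mu_i = \eta_i.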

Generalized linear models (GLMs) are a broad class of models that includes linear regression, ANOVA, Poisson regression, log-linear models and others. The table below provides a good summary of GLMs.

Predicting Buy/Sell for Gold

Code Example for GLM

Problem Statement

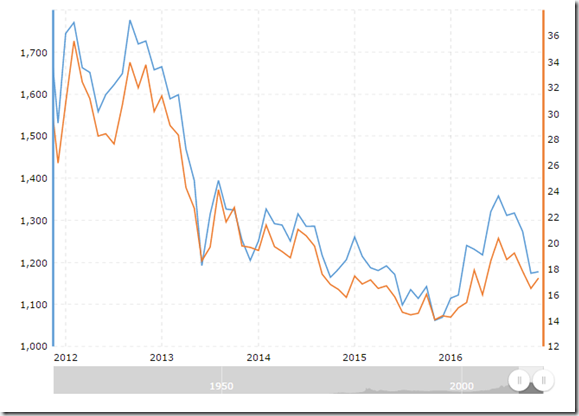

Given historical price data up to and including a given day, the idea is to use the historical gold price together with primary technical indicators to predict whether the gold price fix on the following day will increase or decrease relative to the current day's price fix. The predictions are based primarily on technical indicators calculated from historical price data for gold, as well as on a variety of financial variables.

Feature Definition

Price data alone provides limited insight into future price movement. The idea here is to identify the feature set that best captures the movement of gold prices: one that not only describes past and current movements but can also predict future movement with reasonably good accuracy.

Technical Indicators

Trendlines

The current trend carries vital information for predicting future prices. Trends indicate whether a price movement is likely to continue and, more importantly, can help identify trend reversals (from an uptrend to a downtrend or the other way around). However, gold prices do not depend on the trend alone: fluctuations are driven by many other variables and factors, and unearthing these can be the differentiator for a good model. Trend is therefore one variable among many needed to get high accuracy from the prediction model.

Simple linear regression with a least-squares cost function fits the trend line over the last n days. The fitted equation is shown below.

y = -0.0394777790495269 * x + 1881.30223607803

Here y is the gold price and x is the rate of change.

In the R implementation, this is computed by a user-defined function called slope.
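To make the least-squares fit concrete, here is a minimal Python sketch of a trend slope over the last n days. It is not the slope function from the R code; the name trend_slope is hypothetical, and it assumes the regressor is simply the day index within the window (0, 1, ..., n-1), which may differ from the x used in the equation above.

```python
def trend_slope(prices, n):
    """Least-squares slope of the last n prices against day index 0..n-1.

    Assumption: the regressor is the position of each day in the window,
    not any other variable.
    """
    if n < 2 or len(prices) < n:
        raise ValueError("need n >= 2 and at least n prices")
    window = prices[-n:]
    mean_x = (n - 1) / 2              # mean of 0, 1, ..., n-1
    mean_y = sum(window) / n
    # Slope = covariance(x, y) / variance(x); the 1/n factors cancel.
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in enumerate(window))
    sxx = sum((x - mean_x) ** 2 for x in range(n))
    return sxy / sxx


if __name__ == "__main__":
    # Illustrative prices only, not real gold data.
    print(trend_slope([1250.0, 1248.5, 1251.0, 1247.0, 1245.5], 5))
```

A negative slope suggests a downtrend over the window and a positive slope an uptrend.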

Rate of Change

Momentum measures the difference between the price on day x and the price n days before. Rate of change (ROC) normalizes this difference by the earlier price.

ROC is calculated as: ROCn(x) = (P(x) - P(x-n)) / P(x-n)

ROC indicates the trend: a value above 0 signals an uptrend and a value below 0 a downtrend. Tracking ROC over a period gives a clearer indication of a weakening trend or a trend reversal.

To calculate ROC in R we use the TTR package, which provides a reasonably good ROC function.
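For readers who want to see the arithmetic spelled out, the ROC formula can be sketched in a few lines of Python. This is an illustration of the formula only, not the TTR function; the name rate_of_change is hypothetical, and days without n days of history are returned as None by assumption.

```python
def rate_of_change(prices, n):
    """ROC_n(x) = (P(x) - P(x-n)) / P(x-n) for every day x.

    Days with fewer than n earlier prices get None. Prices are assumed
    to be strictly positive, as gold prices are.
    """
    if n < 1:
        raise ValueError("n must be at least 1")
    roc = [None] * len(prices)
    for x in range(n, len(prices)):
        past = prices[x - n]
        roc[x] = (prices[x] - past) / past
    return roc


if __name__ == "__main__":
    # Illustrative prices only, not real gold data.
    print(rate_of_change([1200.0, 1212.0, 1206.0, 1230.0], 2))
```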

Ratios

The ratio between ROC values calculated over different time intervals (ROCn / ROCm, where m < n) lends insight into how the change in price is itself changing over time.

In the R implementation, this is computed by a user-defined function called ratios.
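A minimal Python sketch of the ratio follows. It is not the ratios function from the R code; the names roc_at and roc_ratio are hypothetical, and returning None when the shorter-interval ROC is zero is an assumption made here to avoid dividing by zero.

```python
def roc_at(prices, x, n):
    """ROC_n(x) = (P(x) - P(x-n)) / P(x-n) on a single day x."""
    return (prices[x] - prices[x - n]) / prices[x - n]


def roc_ratio(prices, x, n, m):
    """ROC_n(x) / ROC_m(x) with m < n.

    Returns None when ROC_m(x) is zero (assumption: treat as undefined).
    """
    if not (0 < m < n <= x < len(prices)):
        raise ValueError("need 0 < m < n <= x < len(prices)")
    short = roc_at(prices, x, m)
    if short == 0:
        return None
    return roc_at(prices, x, n) / short


if __name__ == "__main__":
    # Illustrative prices only, not real gold data.
    print(roc_ratio([100.0, 100.0, 105.0, 110.0], x=3, n=3, m=1))
```

A ratio well above 1 means most of the n-day move happened before the last m days; a ratio near or below 1 means the recent move accounts for most of it.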

Stochastic Oscillator

The stochastic oscillator is used to determine overbought or oversold levels of a stock or commodity. Overbought means the price has increased significantly over a short period and may be artificially high: the underlying asset is overvalued and the market is expected to adjust soon, bringing the price back down. For a better understanding of the stochastic oscillator, refer here.

The stochastic oscillator assumes that in an uptrend prices close near the upper end of the recent price range, and in a downtrend near the lower end. We adapt this to use the daily price fix rather than closing prices.

The oscillator on day x over an n-day period is calculated as follows.

Ln = lowest price over past n days

Hn= highest price over past n days

P(x) = price on day x

%K = (P(x) – Ln)/ (Hn – Ln) x 100%

If %K is below 20%, generate a buy signal; if it is above 80%, generate a sell signal.

In the R implementation, this is computed by a user-defined function called Stochastic_Oscillator.
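The %K calculation and the 20/80 rule can be sketched in Python as follows. This is not the Stochastic_Oscillator function from the R code; the names percent_k and buy_sell_flag are hypothetical. Two assumptions are made here: the n-day window includes day x itself, and anything between the thresholds (or a flat window, where %K is undefined) is labelled Hold.

```python
def percent_k(prices, x, n=14):
    """%K = (P(x) - Ln) / (Hn - Ln) * 100 over the n days ending on day x.

    Uses the daily price fix for P, Ln and Hn. Returns None when the
    window is flat (Hn == Ln), since %K is undefined there.
    """
    if n < 1 or x < n - 1 or x >= len(prices):
        raise ValueError("need n >= 1 and n - 1 <= x < len(prices)")
    window = prices[x - n + 1 : x + 1]   # assumption: window includes day x
    low, high = min(window), max(window)
    if high == low:
        return None
    return (prices[x] - low) / (high - low) * 100.0


def buy_sell_flag(k, lower=20.0, upper=80.0):
    """Map %K to a signal: below lower -> Buy, above upper -> Sell, else Hold."""
    if k is None:
        return "Hold"                    # assumption: no signal on a flat window
    if k < lower:
        return "Buy"
    if k > upper:
        return "Sell"
    return "Hold"


if __name__ == "__main__":
    # Illustrative prices only, not real gold data.
    prices = [1210.0, 1225.0, 1240.0, 1232.0, 1212.0]
    k = percent_k(prices, x=4, n=5)
    print(k, buy_sell_flag(k))
```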

Basic Feature Selection

The final list of features is:

- Slope

- ROC

- Ratios of ROC

- Oscillator for a period of 14: we use the oscillator function to determine the Buy/Sell signal, which is stored in BuySellFlag

Gaussian Regression

Using the set of features selected above, we first try a generalized linear model (GLM) in R; the code can be found here. We pick the data from 2014 onwards. Technical indicators alone may not be enough, but we use only them for this example. The Gaussian family corresponds to the normal distribution, and we are predicting Buy, Sell and Hold for gold based on the daily price and indicator inputs. We could not use binary logistic regression because we have more than two outcomes.

The Gaussian regression with GLM gives an accuracy of 15.78%, which is not acceptable.

Digging further, we decided to use multinomial logistic regression, which is the regression analysis to use when the dependent variable is nominal with more than two levels. It is an extension of logistic regression.

The multinomial logistic regression gives an accuracy of 90.131%, which is reasonably acceptable.
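To show how a multinomial logistic model turns features into a Buy, Hold or Sell call, here is a minimal Python sketch of the prediction step only. It does not fit a model and is not the R code behind the accuracy figure above; the function names, the label order and the coefficients in the usage example are all hypothetical and chosen purely for illustration.

```python
import math


def softmax(scores):
    """Convert one score per class into probabilities that sum to 1."""
    top = max(scores)                          # subtract max for numerical stability
    exps = [math.exp(s - top) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def predict_signal(features, coefficients, intercepts,
                   labels=("Buy", "Hold", "Sell")):
    """Return the most probable label and all class probabilities.

    coefficients holds one list of weights per class and intercepts one
    value per class; both would come from a fitted model.
    """
    scores = [
        b + sum(w * f for w, f in zip(weights, features))
        for weights, b in zip(coefficients, intercepts)
    ]
    probs = softmax(scores)
    best = max(range(len(labels)), key=lambda i: probs[i])
    return labels[best], probs


if __name__ == "__main__":
    # Hypothetical coefficients for two features, e.g. slope and ROC.
    coefs = [[-1.5, -20.0], [0.0, 0.0], [1.5, 20.0]]
    label, probs = predict_signal([-0.4, -0.02], coefs, [0.0, 0.2, 0.0])
    print(label, probs)
```

Unlike a Gaussian GLM, which outputs a single number, this model outputs one probability per class, which is why it suits a three-way Buy, Sell or Hold target.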

In conclusion, we can predict the daily gold Buy, Sell or Hold signal with about 90% accuracy.

However, this may not be enough. To push the accuracy further, we need to include more data.

Intermarket Variables

Gold prices, and commodity prices in general, may also be related to other financial variables. For instance, gold prices are commonly thought to be related to stock prices; interest rates; the value of the dollar; and other factors. Therefore, I wanted to explore whether these other variables could be effective inputs to the prediction of gold prices. I collected the following data over the same time period as the gold price fix data, from early 2007 to late 2013:

- USStockIndices: Dow Jones Industrial Average (DJI); S&P 500 (GSPC); NASDAQ Composite (IXIC)

- WorldStockIndices: Ibovespa (BVSP); CAC 40 (FCHI); FTSE 100 (FTSE); DAX (GDAXI); S&P/TSX Composite (GSPTSE); Hang Seng Index (HSI); KOSPI Composite (KS11); Euronext 100 (N100); Nikkei 225 (N225); Shanghai Composite (SSEC); SMI (SSMI)

- COMEX Futures: Gold futures; Silver futures; Copper futures; Oil futures

- FOREX Rates: EUR-USD (Euro); GBP-USD (British Pound); USD-JPY (Japanese yen); USD-CNY (Chinese yuan)

- Bond Rates: US 5-year bond yield; US 10-year bond yield; Eurobund futures

- Dollar Index: Measures relative value of US dollar

The variables that are highly effective in predicting Buy/Sell for gold, with their correlation coefficients, are:

| Variable | Correlation coefficient |
|---|---|
| 1. Gold Futures | 0.72 |
| 2. Silver Futures | 0.50 |
| 3. Copper Futures | 0.27 |
| 4. EUR-USD | 0.24 |

Adding these features to the dataset should improve the accuracy further.
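A correlation coefficient of this kind can be computed with a short Python sketch like the one below. It assumes the Pearson coefficient between two equally long series, for example the gold price fix and one intermarket variable over the same days; which measure and which series were actually used for the figures above is not stated, so treat this as an illustration. The name pearson is hypothetical.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient between two equally long series."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two series of equal length >= 2")
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        raise ValueError("correlation is undefined for a constant series")
    return sxy / math.sqrt(sxx * syy)


if __name__ == "__main__":
    # Illustrative numbers only, not real market data.
    gold = [1200.0, 1210.0, 1195.0, 1220.0, 1230.0]
    silver = [16.0, 16.3, 15.9, 16.4, 16.8]
    print(pearson(gold, silver))
```

Variables with a coefficient closer to 1 or -1 move more closely with the target and are the more promising candidates to add as features.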

Deploying This Code as a Fully Functional Gold Buy/Sell Predictor

This is fully functional code. One can deploy it on R Server and write the integration code or bot that fetches real-time gold data and calls the model to get the Buy/Sell/Hold signal. Preferably, deploy to Azure Bot Service and use Azure ML to host the algorithm.